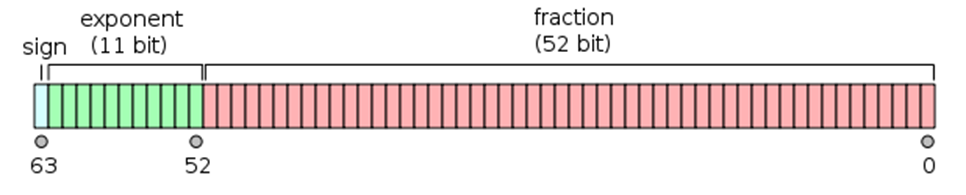

In JavaScript, all numbers are encoded as double-precision floating-point numbers, following the international IEEE 754 standard. This format stores a number in 64 bits: the fraction (also known as the mantissa) is stored in bits 0 to 51, the exponent in bits 52 to 62, and the sign in bit 63.
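To make the bit layout concrete, here is a minimal sketch that splits a number into its three fields. The helper name `decodeDouble` is hypothetical, and the sketch assumes a runtime with `BigInt` support.

```javascript
// Hypothetical helper: split a number into the three IEEE 754 double fields.
function decodeDouble(value) {
  const view = new DataView(new ArrayBuffer(8));
  view.setFloat64(0, value);          // DataView writes big-endian by default
  const bits = view.getBigUint64(0);  // the same 64 bits, read as an unsigned integer
  return {
    sign: Number(bits >> 63n),                 // bit 63
    exponent: Number((bits >> 52n) & 0x7ffn),  // bits 52 to 62, stored with a bias of 1023
    fraction: bits & ((1n << 52n) - 1n),       // bits 0 to 51
  };
}

console.log(decodeDouble(1));  // { sign: 0, exponent: 1023, fraction: 0n }
console.log(decodeDouble(-2)); // { sign: 1, exponent: 1024, fraction: 0n }
```

The fraction is kept as a `BigInt` because the bitwise operators on ordinary numbers only work on 32 bits.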

Floating-point numbers are represented as binary (base 2) fractions. Regrettably, most decimal fractions cannot be represented exactly as binary fractions. The decimal floating-point numbers you enter are only approximated by the binary floating-point numbers actually stored in the machine. As a result, floating-point arithmetic is not always exact, as the following examples show:

0.2 + 0.1

0.30000000000000004

0.3 - 0.1

0.19999999999999998

1111.11+1111.11+1111.11+1111.11+1111.11

5555.549999999999

Moreover, you can lose precision when adding or subtracting decimal numbers of very different magnitudes.

99999999999.0123 + 0.00231432423

99999999999.01462

The same limitation affects integers. Integers between -(2^53 - 1) and 2^53 - 1 are represented exactly. Beyond this range, not all integers can be represented. As a rule of thumb, integers in JavaScript are exact up to 15 decimal digits.

Math.pow(2, 53) - 1

9007199254740991 // Max positive safe integer in JavaScript

Number(999999999999999);

999999999999999

Number(9999999999999999);

10000000000000000
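The built-in `Number.MAX_SAFE_INTEGER` constant and `Number.isSafeInteger` function let you check these limits directly instead of counting digits. A small sketch (the variable name `maxSafe` is only illustrative):

```javascript
// The safe-integer limit, computed the same way as above.
const maxSafe = Math.pow(2, 53) - 1;

console.log(maxSafe === Number.MAX_SAFE_INTEGER);    // true
console.log(Number.isSafeInteger(999999999999999));  // true: 15 digits, stored exactly
console.log(Number.isSafeInteger(9999999999999999)); // false: the literal is rounded to 10000000000000000

// Past the limit, distinct integers collapse into the same double.
console.log(maxSafe + 1 === maxSafe + 2);            // true
```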

In this post, I’ll present two different strategies to overcome these precision issues: one for decimal numbers and another for integer numbers.

Strategy for Decimal Numbers

There are two things to tackle here: the representation and the handling of decimal numbers.

In order to prevent losing precision, decimal values must be serialized as strings and not as JSON numbers. But also, there should be a way to tell if a JSON string value corresponds to a decimal number or is merely a string. One solution could be to create a custom representation for decimal numbers, for instance:

amount: { _type: "BigDecimal", _value_str: "1234.56" }

Having covered the representation part, we need an alternative to JavaScript numbers. Several libraries address this issue, such as big.js, bignumber.js, decimal.js and bigdecimal.js. All of them provide arbitrary-precision decimal arithmetic (find benchmarks here). If you don’t need complex operations such as logarithms or square roots, and you mostly do additions, subtractions, multiplications, and divisions, then the big.js library could be the best choice.

// Creating a Big object (throws an error on an invalid value)

var amount1 = new Big("0.1");

var amount2 = Big("0.2"); // 'new' is optional

amount1.plus(amount2); // the result is a new Big object with value 0.3

The last piece of the solution would be an HTTP interceptor to convert decimal numbers from their custom JSON representation to big.js (or to the implementation you chose) objects and vice versa.
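The conversion step of such an interceptor could be sketched with a `JSON.parse` reviver and a `JSON.stringify` replacer. This is only one possible shape: the helper names and the `PlainDecimal` class are hypothetical, and `PlainDecimal` merely stands in for big.js (or whichever library you chose) so that the sketch runs on its own.

```javascript
// Hypothetical helpers; names and structure are assumptions, not a library API.
const DECIMAL_TYPE = "BigDecimal";

// Reviver for JSON.parse: turns { _type, _value_str } wrappers into decimal objects.
function makeDecimalReviver(makeDecimal) {
  return function (key, value) {
    const isTagged =
      value !== null &&
      typeof value === "object" &&
      value._type === DECIMAL_TYPE &&
      typeof value._value_str === "string";
    return isTagged ? makeDecimal(value._value_str) : value;
  };
}

// Replacer for JSON.stringify: wraps decimal objects back into the tagged form.
function makeDecimalReplacer(isDecimal) {
  return function (key, value) {
    // this[key] is the original value, before any toJSON() conversion was applied.
    const original = this[key];
    return isDecimal(original)
      ? { _type: DECIMAL_TYPE, _value_str: original.toString() }
      : value;
  };
}

// Stand-in decimal type, used here only to keep the example self-contained.
class PlainDecimal {
  constructor(text) { this.text = text; }
  toString() { return this.text; }
}

const reviver = makeDecimalReviver((text) => new PlainDecimal(text));
const replacer = makeDecimalReplacer((v) => v instanceof PlainDecimal);

const wireText = '{"amount":{"_type":"BigDecimal","_value_str":"1234.56"},"note":"1234.56"}';
const parsed = JSON.parse(wireText, reviver);
console.log(parsed.amount instanceof PlainDecimal);      // true
console.log(typeof parsed.note);                         // "string": plain strings are left alone
console.log(JSON.stringify(parsed, replacer) === wireText); // true
```

With a real library, you would pass its constructor and an `instanceof` check in place of the `PlainDecimal` stand-in, then call these two functions from your HTTP client’s request and response hooks.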

Strategy for Integer Numbers

As mentioned before, the safe range for JSON integers is -2^53 < x < 2^53. So, let’s analyze what can fit inside that range:

- 2^53 milliseconds has us covered for roughly ±285,000 years. Do you need to handle dates outside this range?

- Assuming that you represent DB table ids as integers, are you going to insert more than 9 quadrillion rows in a table?

- If you also use integers to represent sort orders, quantities, numbers of days, etc., do you need to support integer quantities over 9,007,199,254,740,991?

In most scenarios, we could declare that there is no problem. Our code breaks when provided with obscenely large numbers, but we simply do not use numbers that large and we never will.

So, as a solution for integer values, we’ll reject values outside of the safe range, even when they fit in a double. For this, you can use custom serializers/deserializers to prevent sending/receiving integer values outside of the safe range.
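One way to sketch such a serializer/deserializer pair is to pass the same guard function to `JSON.stringify` and `JSON.parse`. The names `assertSafeInteger`, `serialize` and `deserialize` are hypothetical, and throwing a `RangeError` is just one possible way to reject a value.

```javascript
// Hypothetical guard, usable both as a JSON.stringify replacer and a JSON.parse reviver.
function assertSafeInteger(key, value) {
  // Every double with a magnitude of 2^53 or more is an integer, so this
  // rejects any number outside the safe range, even though it fits in a double.
  if (typeof value === "number" && Number.isInteger(value) && !Number.isSafeInteger(value)) {
    throw new RangeError(`Integer out of safe range at "${key}": ${value}`);
  }
  return value;
}

const serialize = (data) => JSON.stringify(data, assertSafeInteger);
const deserialize = (text) => JSON.parse(text, assertSafeInteger);

console.log(serialize({ id: 9007199254740991 })); // {"id":9007199254740991}

try {
  // The text holds 2^53 + 1, which JSON.parse rounds to 2^53 before the guard sees it.
  deserialize('{"id": 9007199254740993}');
} catch (err) {
  console.log(err instanceof RangeError); // true
}
```

The check on the receiving side still works after rounding, because any integer outside the safe range is parsed to a double that is itself outside the safe range.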

Summary

It’s very important to tackle the JavaScript numeric precision issue sooner rather than later, ideally before you write the first line of code of your application. By the time you realize that this issue has affected your application, it may be too late and some data may already be corrupted.